
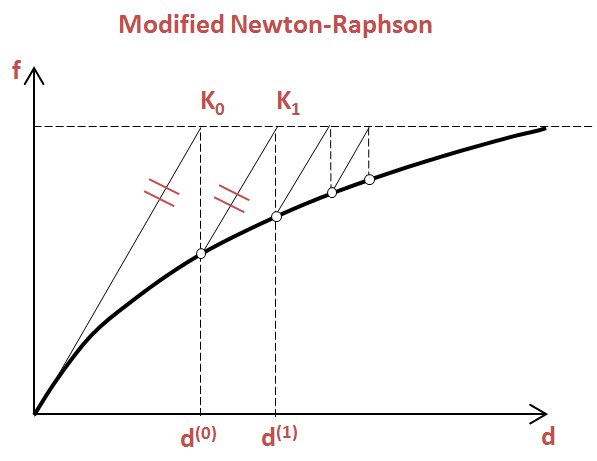

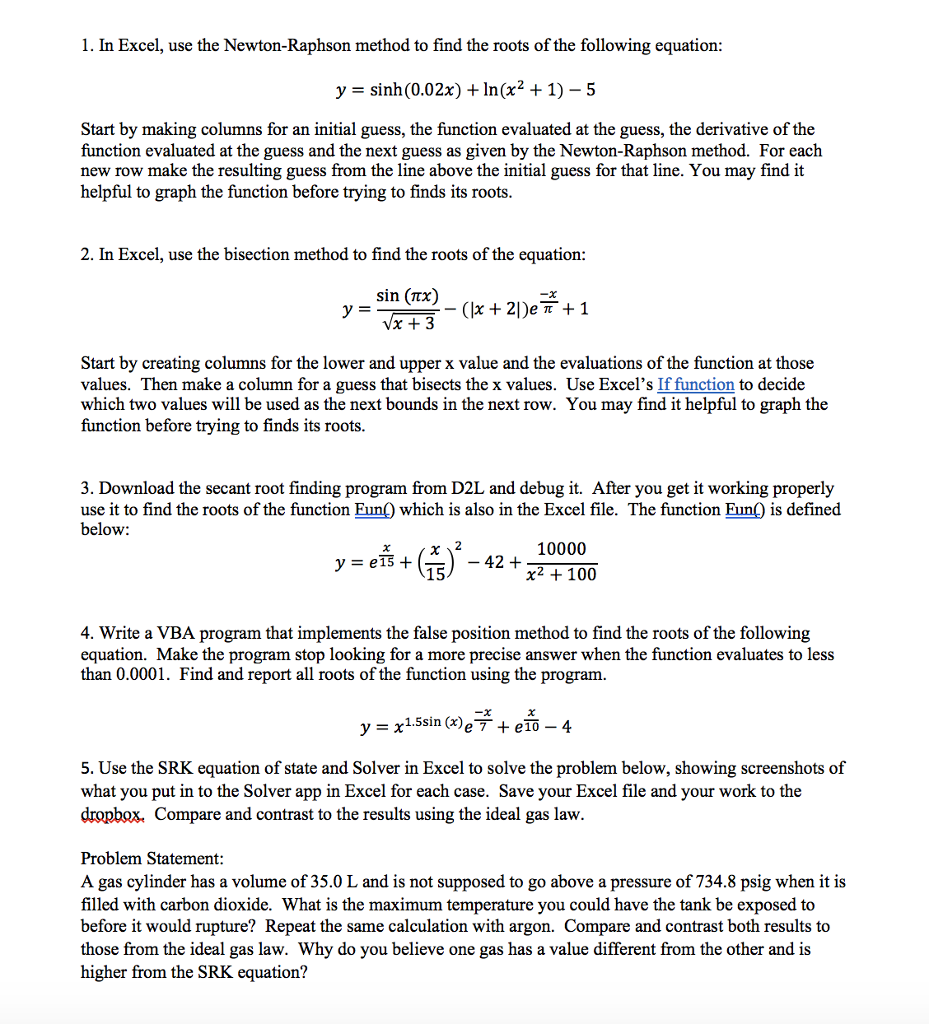

Gradient descent (or steepest descent) is a first-order iterative optimization algorithm for finding the minimum of a function. To find a local minimum, one takes steps proportional to the negative of the gradient (or approximate gradient) of the function at the current point. If one instead takes steps proportional to the positive of the gradient, one approaches a local maximum of that function; the procedure is then known as gradient ascent. The approach is based on the observation that if a multivariable function is defined and differentiable in a neighborhood of a point, then the function decreases fastest in the direction of the negative gradient.

The Newton-Raphson method is named after Newton and Joseph Raphson. Also known as the tangent method, it draws a tangent at each approximation, and these tangents gradually lead to the real root.

The code below runs both methods on `f_2` and plots their iterates on a surface plot and a contour plot (`...` marks values that are cut off in the source):

    root_gd, iter_x_gd, iter_y_gd, iter_count_gd = Gradient_Descent(Grad_f_2, -2.5, -0.010, 1, nMax=25)
    root_nr, iter_x_nr, iter_y_nr, iter_count_nr = Newton_Raphson_Optimize(Grad_f_2, Hessian_f_2, -2, ...)

    X, Y = np.meshgrid(x, y)
    Z = f_2(X, Y)

    # Angles needed for quiver plot (step between successive iterates)
    anglesx = iter_x_gd[1:] - iter_x_gd[:-1]
    anglesy = iter_y_gd[1:] - iter_y_gd[:-1]
    anglesx_nr = iter_x_nr[1:] - iter_x_nr[:-1]
    anglesy_nr = iter_y_nr[1:] - iter_y_nr[:-1]

    %matplotlib inline
    fig = plt.figure(figsize=(16, 8))

    # Surface plot
    ax = fig.add_subplot(1, 2, 1, projection='3d')
    ax.plot_surface(X, Y, Z, rstride=5, cstride=5, cmap='jet', alpha=...)
    ax.plot(iter_x_gd, iter_y_gd, f_2(iter_x_gd, iter_y_gd), color='r', marker='*', alpha=...)
    ax.plot(iter_x_nr, iter_y_nr, f_2(iter_x_nr, iter_y_nr), color='darkblue', marker='o', alpha=...)
    ax.legend()
    # Rotate the initialization to help viewing the graph
    # ax.view_init(45, 280)
    ax.set_ylabel('y')

    # Contour plot
    ax = fig.add_subplot(1, 2, 2)  # arguments assumed; the call is truncated in the source
    ax.contour(X, Y, Z, 60, cmap='jet')
    # Plotting the iterations and intermediate values
    ax.scatter(iter_x_gd, iter_y_gd, color='r', marker='*', label='Gradient descent')
    ax.quiver(iter_x_gd[:-1], iter_y_gd[:-1], anglesx, anglesy, scale_units='xy', angles='xy', scale=1, color='r', alpha=...)
    ax.scatter(iter_x_nr, iter_y_nr, color='darkblue', marker='o', label='Newton')
    ax.quiver(iter_x_nr[:-1], iter_y_nr[:-1], anglesx_nr, anglesy_nr, scale_units='xy', angles='xy', scale=1, color='darkblue', alpha=...)
    ax.set_title('Comparing Newton and Gradient descent')
    plt.show()

The same plotting code is then repeated for a second function, with `Z = Himmer(X, Y)` in place of `Z = f_2(X, Y)`.

I'm learning Newton-Raphson to get the reciprocal of any arbitrary value. I need this in order to accurately emulate how the PlayStation 1 does the divide, which uses a modified version of the algorithm. Trying to understand the basic algorithm, I came across this video, which is all fine and dandy and makes sense. But when I started writing the code to get the reciprocal, I wasn't sure how to assign the initial guess value. In his example, he set it to 0.1f to find the reciprocal of 7. I found that if I set it to anything else, I would get totally different results. Changing the number of iterations doesn't help; it yields even more drastically different results.

Code:

    float GetRecip(float Number, float InitialGuess, int Iterations)

    float Recip1 = GetRecip(7, 0.1f, 3); // 0.142847776
    float Recip2 = GetRecip(7, 0.5f, 3); // -217.839844

If I set it to 0.5f, I would get -217.839 in 3 iterations. How do I go about finding that initial guess of 0.1f?

The algorithm is obfuscated a bit (among other things) because the GTE works exclusively with fixed point numbers. Barring these details, the algorithm to approximate $d^{-1}$ ...
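On the initial-guess question: the basic Newton-Raphson reciprocal iteration is $x_{n+1} = x_n (2 - d\,x_n)$, and it reproduces both values quoted in the question. Writing the error as $e_n = 1 - d\,x_n$ gives $e_{n+1} = e_n^2$, so the iteration only converges when $0 < d\,x_0 < 2$. For $d = 7$ that means $0 < x_0 < 2/7 \approx 0.286$, which is why 0.1 works and 0.5 blows up. The sketch below is a Python illustration, not the GTE's fixed-point variant. The body of `get_recip` is an assumption, since the question only shows the signature of `GetRecip`, and `initial_guess_pow2` is a hypothetical helper that handles positive inputs only.

```python
import math


def get_recip(number, initial_guess, iterations):
    """Approximate 1/number with the basic Newton-Raphson reciprocal iteration.

    Assumed body: the question only shows the signature of GetRecip.
    """
    x = initial_guess
    for _ in range(iterations):
        # Newton step for f(x) = 1/x - number
        x = x * (2.0 - number * x)
    return x


def initial_guess_pow2(number):
    """Hypothetical helper: pick a power-of-two guess inside (0, 2/number).

    For number = m * 2**e with 0.5 <= m < 1, the guess 2**-e gives
    number * guess = m, so the starting error 1 - m is at most 0.5.
    Only valid for number > 0.
    """
    if number <= 0:
        raise ValueError("this sketch only handles positive inputs")
    _, exponent = math.frexp(number)
    return math.ldexp(1.0, -exponent)


if __name__ == "__main__":
    print(get_recip(7, 0.1, 3))                    # guess inside (0, 2/7): converges
    print(get_recip(7, 0.5, 3))                    # guess outside (0, 2/7): diverges
    print(get_recip(7, initial_guess_pow2(7), 5))  # automatic guess of 0.125
```

Because the error squares on every step, a guess with starting error at most 0.5 reaches double precision within a handful of iterations. Table-based or linear starting estimates shrink the starting error further and save iterations.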
